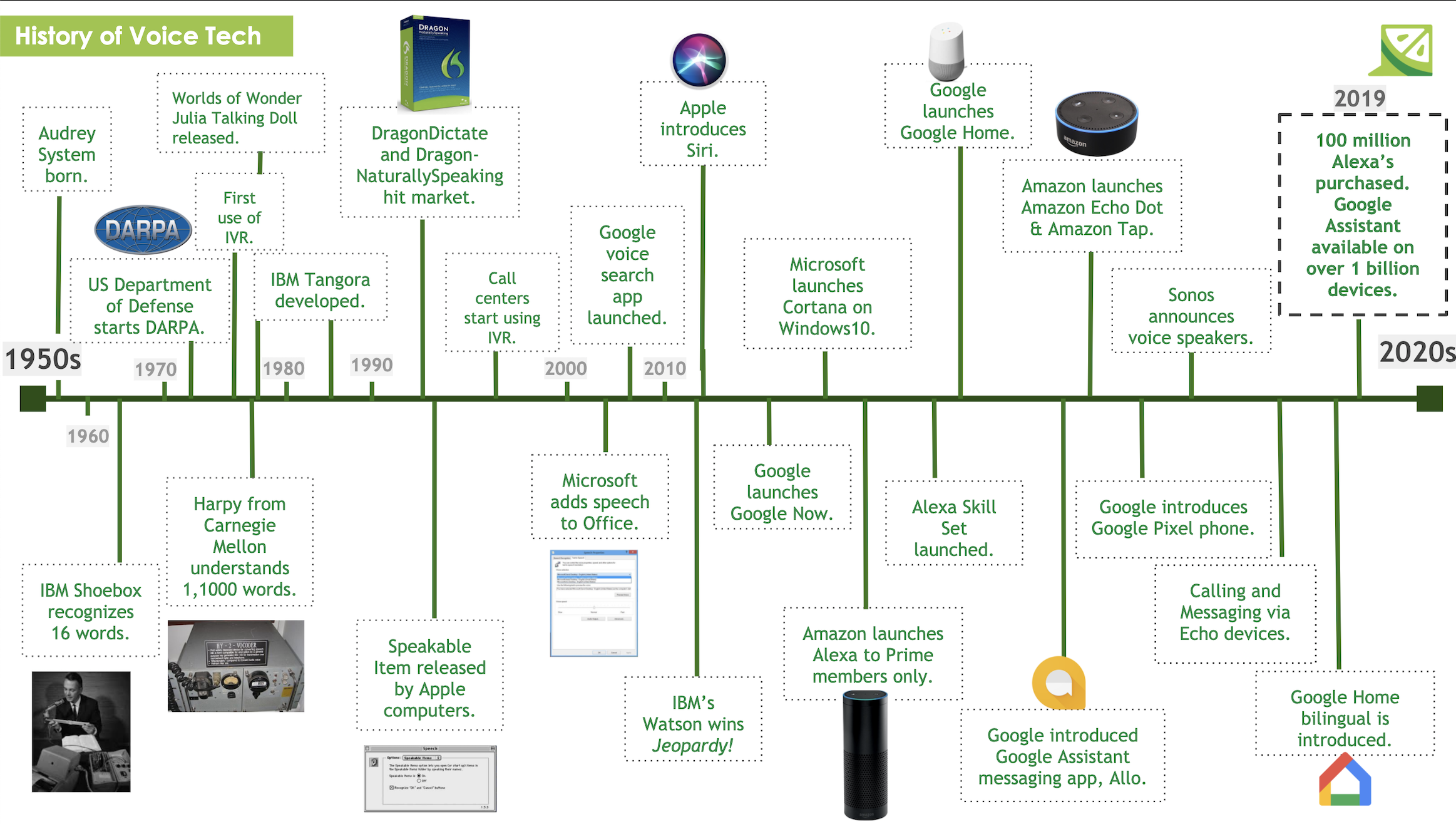

A ‘Voice First’ UI lets users navigate an interface using their own voice as the primary input method. It marks a new era of technology, one that moves away from relying only on the traditional screen and blends in voice recognition.

Voice CUI Examples

Here are some examples of how a ‘Voice First’ UI is emerging in today’s world:

- Google’s Tacotron 2: A neural network architecture that synthesizes speech directly from text, with output rated close to human recordings in naturalness. A key aspect of the technology is its ability to speak in a natural, colloquial way. This drives another key factor: how comfortable the human user actually feels speaking to, and sharing with, the app.

- Cisco Spark Assistant: Spark Assistant lets users set up meetings, invite attendees, and book rooms and equipment by voice.

- Amazon Alexa: An intelligent personal assistant set to play a role across Amazon Fire devices, groceries, and all of Amazon’s shopping and search categories, collecting a single user’s behavior across many market verticals.

- Google Voice Search: A Google product that lets users search Google by speaking into their device.

- Automotive: Voice control improves safety by reducing distractions, since drivers can operate in-car systems without taking their hands off the wheel or their eyes off the road.

These are just a few examples of how ‘Voice First’ UIs are being used today. Voice is becoming a core part of modern technology, and the list will only continue to grow.

