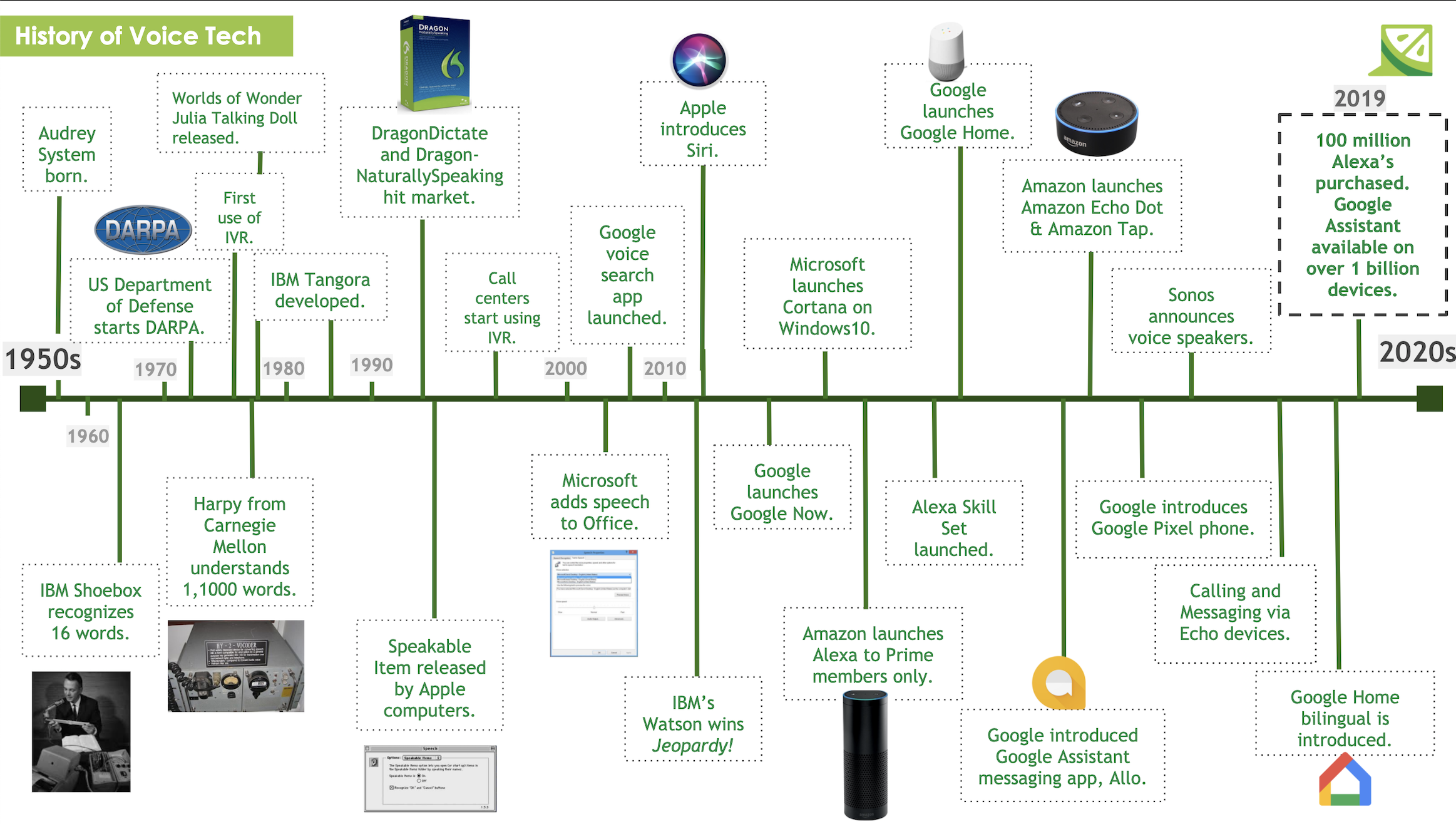

In recent years, automated speech recognition (ASR) systems have become an integral part of many applications, creating a world of virtual assistants (VAs) that live in our mobile devices, smart home applications, and vehicle systems. What makes ASR systems special is their ability to quickly and accurately convert spoken language to text. Speech recognition requires sophisticated machine learning algorithms capable of converting all of the nuances and complexities of spoken language into actionable commands in real time. This is possible thanks to deep learning algorithms’ ability to digest large datasets and learn patterns that improve accuracy.

Despite this rather mechanical process, racial bias is a major issue not only in speech recognition but also in other advancing technologies. There is evidence of racial biases in areas such as machine learning, facial recognition, natural language processing, online advertising, and risk prediction for criminal justice, healthcare, and child services. In most cases, these biases are not overtly expressed but are rather the unintended consequence of providing machine learning algorithms with datasets that are not representative of the entire population. In more extreme cases, when machine learning algorithms are given the chance to train freely on content from the Internet, devastating consequences can ensue.

Machine Learning Systems

A few years ago, for example, a Twitter bot named Tay was programmed to be totally naive and ready to learn about human conversation. Through machine learning, she was able to integrate input from others and emulate conversational patterns. What seemed like a good idea at first quickly devolved. By the end of her first day online, Tay was spewing alt-right propaganda, racist comments, and disturbing social commentary. Tay’s makers note that the loudest voices are often the most volatile and the least informed, and when it comes to AI their influence is a real issue. How, then, do you teach a machine to acquire knowledge online while also recognizing that the loudest and most response-provoking comments need to be taken with a grain of salt?

This is not limited to chatbots: racial biases are also found in many of the most popular voice assistant products (i.e., conversational UIs) on the market. A 2020 Stanford study found that ASR tools from Amazon, Apple, Google, IBM, and Microsoft all exhibited racial disparities: on average, they misidentified 35% of the words spoken by African Americans, compared with 19% for white speakers. In addition, only about 2% of white speakers’ audio snippets were considered unreadable, a figure that jumped to 20% for African American speakers. In a similar study, Tatman and Kasten (2017) examined dialect and gender biases in YouTube’s automatic captioning and found that error rates for sets of matched phrases were twice as high for African American speakers as for white speakers (3). This indicates that racial disparities stem not from the type of word being used but from differences in pronunciation (e.g., rhythm, pitch, syllable accenting, vowel duration), and that ASR systems therefore need additional training data from more diverse groups of people.
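
The error rates quoted above are typically reported as word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the system's transcript into the reference transcript, divided by the number of reference words. The following is a minimal sketch of that calculation with a per-group average, not the method used in any of the studies cited. The function names, group labels, and sample sentences are all hypothetical, and real evaluations usually normalize text (punctuation, numerals, casing) more carefully than this.

```python
from typing import Dict, Iterable, List, Tuple


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


def mean_wer_by_group(
    samples: Iterable[Tuple[str, str, str]],
) -> Dict[str, float]:
    """Average per-utterance WER for each speaker group.

    Each sample is (group, reference transcript, ASR hypothesis).
    """
    rates: Dict[str, List[float]] = {}
    for group, reference, hypothesis in samples:
        rates.setdefault(group, []).append(
            word_error_rate(reference, hypothesis)
        )
    return {group: sum(v) / len(v) for group, v in rates.items()}


# Made-up example data, for illustration only.
samples = [
    ("group_a", "turn on the kitchen lights", "turn on the kitchen lights"),
    ("group_b", "turn on the kitchen lights", "turn on a kitchen light"),
]
for group, wer in mean_wer_by_group(samples).items():
    print(f"{group}: WER = {wer:.2f}")
```

Reporting WER separately for each group, rather than as one pooled number, is what makes a disparity like the one described above visible in the first place.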

Improvements to Voice Technology

Despite dramatic improvements in the technology, automatic speech recognition systems continue to struggle to maintain high accuracy in the face of sociolinguistic variation. This is particularly troubling given that African Americans and other non-white English speakers who use various regional dialects are also typically the most vulnerable to other forms of discrimination. One reason for this racial disparity may be a failure of the people designing the technology to anticipate the experiences of people different from themselves. In light of this, how do you teach critical thinking to a machine without constraining it with your own myopic perspectives?

While differences in error rates may not seem like a big deal to some, imagine constantly being told that your way of speaking isn’t compatible with software that your more affluent neighbors don’t seem to have trouble with. This becomes a constant microaggression, making it considerably harder for African Americans to benefit from the increasingly widespread use of speech recognition technology. Another disadvantage is the impact on someone’s livelihood if speech recognition is used by potential employers to automatically evaluate candidate interviews, or by the criminal justice system to automatically transcribe courtroom proceedings.

As voice technologies continue to grow and become more embedded in our society, it is critical that we recognize and work to alleviate racial biases now. It’s just as much our responsibility to use our tools prudently as it is the responsibility of developers and engineers to account for their own myopic thinking when building the technology. One potential way to help mitigate this is for developers to ensure they always use a diverse training dataset that reflects the entire population. This will help to reduce performance discrepancies and help ensure speech recognition technology is inclusive.
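
One simple way to check whether a training dataset reflects the population is to compare each group's share of the dataset with its share of the target population. The sketch below illustrates the idea under stated assumptions: the function names, group labels, tolerance, and population shares are hypothetical placeholders, not real demographic figures, and a real audit would also need to consider hours of audio, dialects, and recording conditions.

```python
from collections import Counter
from typing import Dict, Iterable, List


def representation_gaps(
    speaker_groups: Iterable[str],
    population_shares: Dict[str, float],
) -> Dict[str, float]:
    """Dataset share minus population share for each group.

    A negative value means the group is underrepresented in the dataset.
    """
    counts = Counter(speaker_groups)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("dataset contains no speakers")
    return {
        group: counts.get(group, 0) / total - share
        for group, share in population_shares.items()
    }


def underrepresented(
    gaps: Dict[str, float], tolerance: float = 0.05
) -> List[str]:
    """Groups whose dataset share falls short by more than `tolerance`."""
    return sorted(g for g, gap in gaps.items() if gap < -tolerance)


# Placeholder numbers, for illustration only.
dataset_speakers = ["group_a"] * 8 + ["group_b"] * 2
target_population = {"group_a": 0.6, "group_b": 0.4}

gaps = representation_gaps(dataset_speakers, target_population)
print(underrepresented(gaps))
```

A check like this only covers who is in the data. It does not guarantee equal accuracy, so it works best alongside per-group error measurements on a held-out test set.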

If left unchecked, biased algorithms can have a disparate impact on certain groups of people without the programmer intending it. A set of best practices for algorithmic bias detection and mitigation is currently being developed to create a standardized way to help reduce racial biases across emerging technologies. Understanding and working to prevent racial biases in voice technologies is critical because this technology is reshaping the world around us, and it has to be fair and equal for all of its users, not just those who fit within the same demographics as those creating it.

Watch Our EmTech Masterclass: Voice Tech Talk

Learn more about the human aspect of Voice Technology from one of our recent EmTech Masterclass sessions, Voice Tech Talk, and stay tuned for our next session coming soon!
