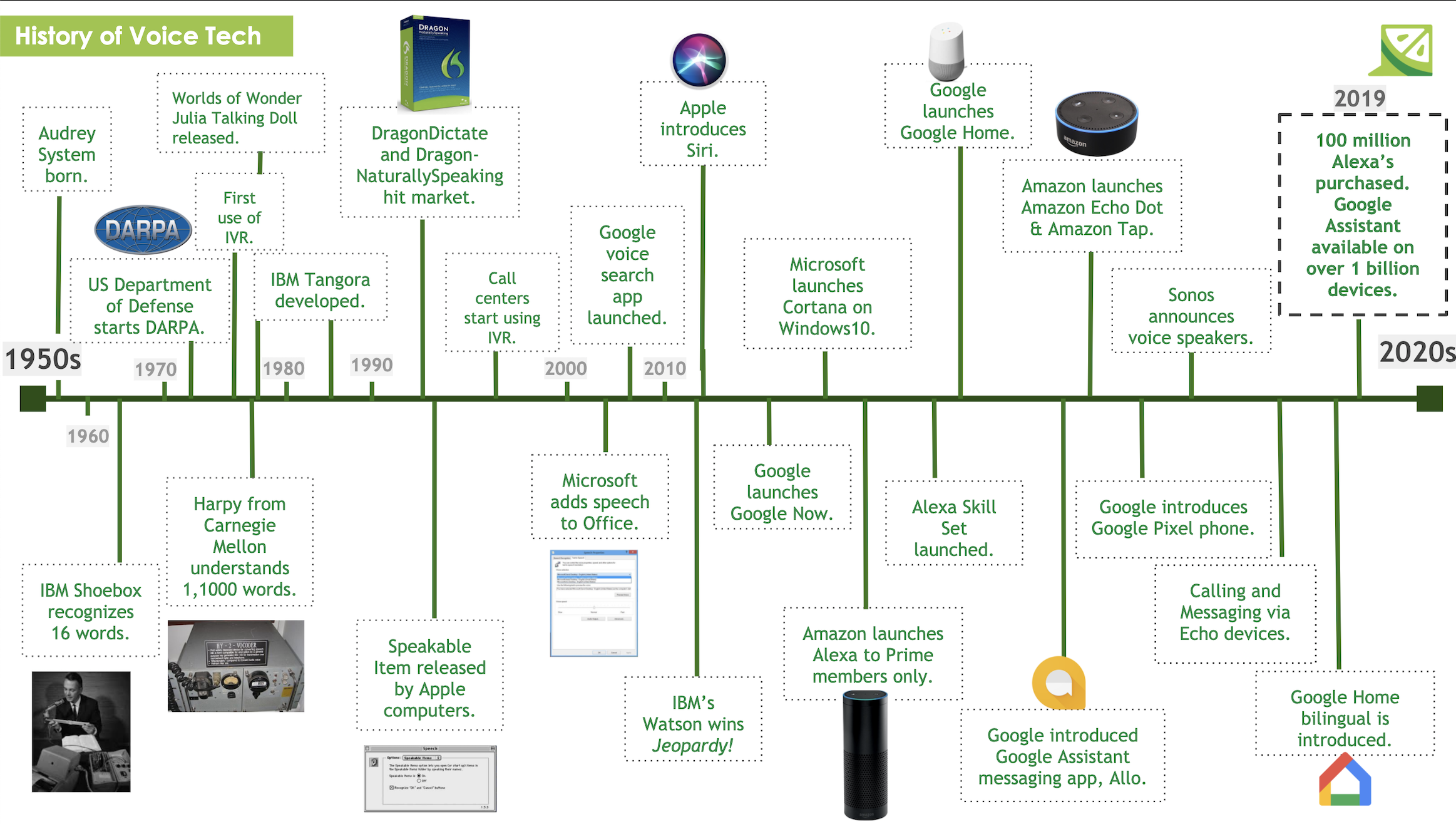

Last week, we discussed how voice technology has transformed from a nascent technology relegated to science fiction into something people use on a day-to-day basis. As we delve deeper into the world of voice technology, there is a great deal of jargon and terminology to keep straight. To make that task a little easier, and so you all know what we are talking about, we have created this handy guide to break down the most commonly used voice technology vocabulary.

Voice Technology Terms

For starters, when we talk about voice technology, we are typically talking about a user interacting with a Voice User Interface (VUI): an interface that a user controls with voice or speech commands. VUIs are often confused with Conversational User Interfaces (CUIs), since both aim to emulate a human conversation between a user and their device. The big difference lies in how that conversation is mimicked: VUIs interact exclusively through voice, whereas CUI refers to the broader group of interfaces that emulate conversation, including chatbots and IVRs. Amazon Alexa, Google Assistant, Siri, and Cortana are all examples of VUIs, and they are also virtual assistants. Virtual Assistants, or AI Assistants, are systems that can carry out a variety of tasks or services based on a command or question from the user.

A virtual assistant becomes a Voice Assistant or Speech Assistant when those commands or questions are given through the user’s voice. Chatbots are programs that automate conversations on a web page or in an instant messenger; you’ve most likely encountered a chatbot as a customer service alternative in your auto insurance app. An interactive voice response system (IVRS) is a computer-automated telephone system that interacts with callers by providing automated responses, gathering information, or routing calls to the correct place. Aside from these systems, most folks are used to interacting with a VUI through the speech-to-text functionality available on most smartphones, computers, and tablets nowadays. Speech-to-text takes the user’s spoken words and transcribes them into text on their device. Conversely, text-to-speech lets users type text on their device and have it spoken back to them.
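To make the call-routing idea concrete, here is a minimal Python sketch of how an IVR might decide where to send a caller, assuming the caller either presses a key or says something that speech-to-text has already transcribed. The menu digits, keywords, and queue names are hypothetical, and real IVR platforms use far more robust speech recognition than simple keyword matching.

```python
from typing import Optional

# Hypothetical keypad menu: digit pressed -> destination queue.
MENU = {
    "1": "claims",
    "2": "billing",
    "3": "roadside",
}

# Hypothetical spoken keywords -> destination queue.
KEYWORDS = {
    "claim": "claims",
    "bill": "billing",
    "payment": "billing",
    "tow": "roadside",
    "flat tire": "roadside",
}


def route_call(dtmf: Optional[str] = None, transcript: Optional[str] = None) -> str:
    """Return the queue a caller should be routed to."""
    # Keypad (DTMF) input is unambiguous, so check it first.
    if dtmf is not None and dtmf in MENU:
        return MENU[dtmf]
    # Otherwise scan the speech-to-text transcript for a known keyword.
    # Plain substring matching is deliberately naive for this sketch.
    if transcript:
        text = transcript.lower()
        for keyword, queue in KEYWORDS.items():
            if keyword in text:
                return queue
    # Nothing matched: hand the caller to a human operator.
    return "operator"
```

With this sketch, `route_call(dtmf="2")` returns `"billing"`, and a transcript such as "I need a tow, my car broke down" lands in `"roadside"`. Anything unrecognized falls back to a human operator, a sensible default so callers are not stuck in a loop.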

Voice technology can be built into devices in a variety of forms. One of the most popular is the smart speaker. Smart speakers (e.g., the Amazon Echo, which runs Alexa, and Google Home) are speakers with a built-in virtual assistant that responds to voice commands. Part of their consumer allure is that users can interact with them solely by voice, including activating the device. Smart speakers are activated by a particular word or phrase, often referred to as a wake word or trigger word. For Alexa, the default wake word is simply the name “Alexa” spoken out loud. After hearing the wake word, the device is activated and ready to carry out tasks for the user.

Intents are the tasks a virtual assistant can do for you. For example, in “Hey Alexa, order me another Alexa on Amazon,” the intent is placing an order, while “another Alexa” (the item) and “Amazon” (the store) are the details the assistant needs to complete it, often called slots. To confirm that it understood the request correctly, the virtual assistant may repeat part or all of it back, seeking either explicit or implicit confirmation. Here, Alexa could confirm explicitly by asking, “You wanted me to order an Amazon Alexa off of Amazon, right?” or implicitly by moving the conversation forward with “What kind of Amazon Alexa?” Once the user is done, an exit command signals the virtual assistant to stop or exit the interaction, e.g., “Alexa, cancel.”
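The wake word, intent, confirmation, and exit-command flow described above can be sketched in a few lines of Python. This is a toy that assumes the speech has already been transcribed to text. The regular expressions, the intent names `OrderItem` and `Exit`, the slot names for the details an intent needs, and the reply wording are all hypothetical; real assistants such as Alexa use trained language models and their own developer tooling rather than anything like this.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, Optional

# Wake word: optional "hey", then "alexa" as a whole word; group 1 is the command.
WAKE_PATTERN = re.compile(r"^\s*(?:hey\s+)?alexa\b[\s,]*(.*)$", re.IGNORECASE)

# Hypothetical pattern for one intent:
# "order [me] [another|a|an] <item> on|from|off of <store>".
ORDER_PATTERN = re.compile(
    r"^order (?:me )?(?:another |an? )?(?P<item>.+?) (?:on|from|off of) (?P<store>\w+)$",
    re.IGNORECASE,
)

# Hypothetical exit commands that end the interaction.
EXIT_COMMANDS = {"cancel", "stop", "exit"}


@dataclass
class Intent:
    name: str
    slots: Dict[str, str] = field(default_factory=dict)


def strip_wake_word(utterance: str) -> Optional[str]:
    """Return the command spoken after the wake word, or None if it was not said."""
    match = WAKE_PATTERN.match(utterance)
    if match is None:
        return None
    return match.group(1).strip().rstrip(".!?")


def parse_intent(command: str) -> Intent:
    """Map a command to an intent name plus any slot values it carries."""
    if command.lower() in EXIT_COMMANDS:
        return Intent("Exit")
    match = ORDER_PATTERN.match(command)
    if match:
        return Intent("OrderItem", {"item": match.group("item"), "store": match.group("store")})
    return Intent("Unknown")


def confirm(intent: Intent, explicit: bool) -> str:
    """Echo the understood request back to the user."""
    item, store = intent.slots["item"], intent.slots["store"]
    if explicit:
        # Explicit confirmation: ask a yes/no question before acting.
        return f"You want me to order {item} from {store}, right?"
    # Implicit confirmation: show what was understood while moving the dialog on.
    return f"Which kind of {item} would you like from {store}?"


def handle_turn(utterance: str, explicit: bool = True) -> Optional[str]:
    """Process one transcribed utterance and return the assistant's reply."""
    command = strip_wake_word(utterance)
    if command is None:
        return None  # no wake word, so the device stays idle
    intent = parse_intent(command)
    if intent.name == "Exit":
        return "Goodbye."
    if intent.name == "OrderItem":
        return confirm(intent, explicit)
    return "Sorry, I didn't catch that."
```

Calling `handle_turn("Hey Alexa, order me another Alexa on Amazon.")` returns the explicit confirmation "You want me to order Alexa from Amazon, right?", passing `explicit=False` gives the implicit version, and an utterance without the wake word returns `None`, so the device stays idle.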

Understanding your Voice Technology

Smart speakers like those running Alexa rely on natural language processing (NLP) to understand a human’s natural language. When designed effectively, these systems can interact with users “naturally”: they understand what is said to them, determine the appropriate response, and reply in a way that makes sense. Conversational UIs such as chatbots lean more specifically on natural language understanding (NLU), a subset of NLP that focuses on machine reading comprehension, that is, working out what the user actually means. NLU is one of the trickier parts of the problem, because human language is full of intricacies such as swapped words, colloquialisms, and contractions that are difficult for a system to decipher.
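The understand, decide, respond loop, and why colloquialisms make it harder, can be illustrated with a small text-only Python sketch. It assumes speech has already been converted to text and that a text-to-speech step would speak the reply. The normalization table, keyword rules, and intent names are hypothetical, and production NLU relies on statistical models rather than hand-written lookups.

```python
import re
from typing import Dict, Tuple

# Hypothetical normalization table: contractions and colloquialisms are
# rewritten to canonical words before intent matching.
NORMALIZE: Dict[str, str] = {
    "what's": "what is",
    "gonna": "going to",
    "gimme": "give me",
    "tunes": "music",
}

# Hypothetical keyword rules for two intents (checked in insertion order).
INTENT_RULES: Dict[str, Tuple[str, ...]] = {
    "GetWeather": ("weather", "rain", "forecast"),
    "PlayMusic": ("play", "song", "music"),
}

# Canned replies; a real assistant would generate these and hand them to text-to-speech.
RESPONSES: Dict[str, str] = {
    "GetWeather": "Here is today's forecast.",
    "PlayMusic": "Playing music.",
    "Unknown": "Sorry, I don't know how to help with that yet.",
}


def understand(text: str) -> str:
    """Normalize the user's words, then map them to an intent name."""
    words = re.findall(r"[a-z']+", text.lower())
    normalized = " ".join(NORMALIZE.get(word, word) for word in words)
    tokens = set(normalized.split())
    for intent, keywords in INTENT_RULES.items():
        if tokens & set(keywords):
            return intent
    return "Unknown"


def respond(text: str) -> str:
    """Run the full loop: understand the input, then choose a reply."""
    return RESPONSES[understand(text)]
```

Here `understand("Gimme some tunes")` returns `"PlayMusic"` only because the table maps "tunes" to "music"; remove that entry and the same request falls through to `"Unknown"`, which is exactly the kind of gap that makes colloquial speech hard for NLU.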

When it comes to the wonderful world of voice technology, a great many technical terms are used to capture the intricacies and nuances that make it unique. This article provides a starting point for the most commonly used phrases and terms. Now that you know what we are talking about, be sure to stay tuned for more voice technology blog articles!
